Three camps in AI coding tooling: where the empirical evidence keeps pointing

Until recently, the central question in AI-assisted development was whether models could write code. They can. So the question changed.

The new bottleneck isn't intelligence. It's coordination. AI coding sessions forget what happened in the previous session. Plans drift mid-execution. The agent confidently writes a SQL migration that would have wiped a column in production.

None of those failures are model-IQ problems. All of them are operational problems. And operational problems get solved with operational infrastructure, not bigger models.

That is why the AI coding ecosystem is suddenly producing dashboards, orchestrators, memory systems, review pipelines, MCP servers, and .md conventions at an extraordinary rate. The center of gravity has moved from the model to the system around the model.

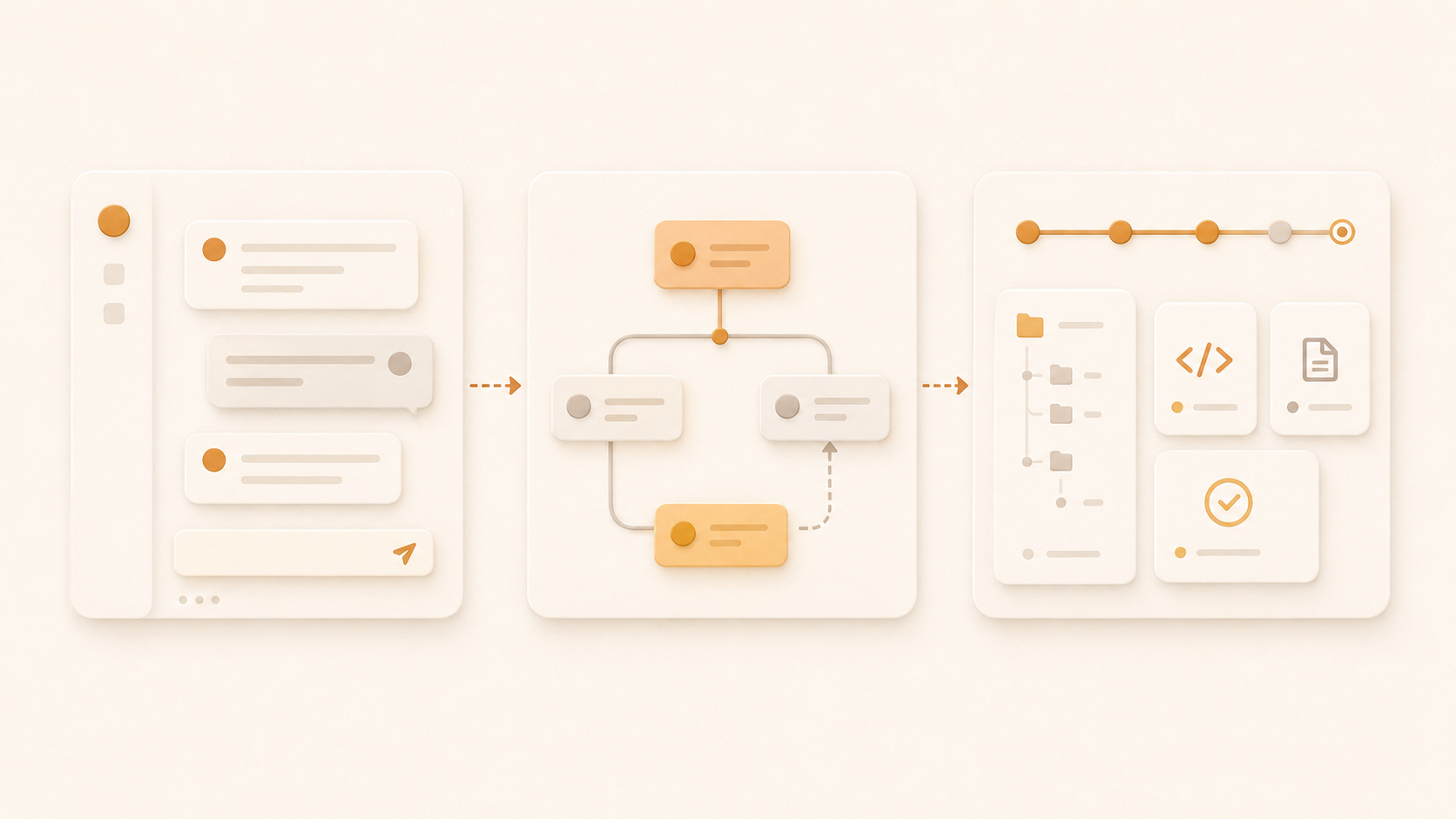

Three philosophical camps are emerging. Most of the attention is on the first two. The third is the one that keeps appearing in the empirical data.

A study of nearly 3,000 repositories found that when developers adopt AI-coding configuration at scale, they reach for files in the repo, not the more advanced runtime mechanisms. Among eight options measured, plain context files won.

01 / The model in your IDE

This is what most developers see first. Cursor. Copilot. Windsurf. The model lives inside an IDE. You write a prompt; it edits the file.

It optimizes for zero-friction onboarding and individual productivity per session. What it doesn't solve: durable state across sessions, supervision of long-running work, audit trails. Even with persistent rules and project instructions, the default interaction model remains session-scoped. Wins for focused individual work; struggles when the work is multi-session.

02 / AI as distributed workflows

LangGraph, CrewAI, AutoGen, OpenHands. The system is a runtime, usually a graph of agent nodes with explicit state, retries, branching, and observability. Everything happens inside an orchestration engine.

It optimizes for production reliability, durable execution, multi-agent coordination, observability at runtime. What it doesn't naturally solve: repo-native portability of state, low-friction adoption, human-readable operational memory that travels with the code. State lives in the runtime; the repo is just where the code is. Wins for production agent systems where reliability is the dominant constraint; struggles when the user wants to inspect what the system did without tracing through a graph engine.

03 / The repository becomes the substrate

CLAUDE.md and AGENTS.md conventions. The .cursor/rules pattern. GitHub's spec-kit. Tools like Storybloq. The system is the repository itself: files, commits, conventions, structured artifacts inside the repo. The runtime is whatever AI client the developer is using; the substrate is git.

It optimizes for human-readable state, machine-readable state, durability across model churn, no SaaS lock-in, inspectability, diffability. What it doesn't try to solve: heavyweight runtime orchestration, enterprise governance UI, hosted multi-tenancy.
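As a rough illustration of how lightweight this substrate is, the sketch below checks which of the context-file conventions named above are present in a repository. The function name and the idea of treating these three paths as a fixed list are assumptions made for illustration; no tool mentioned here is claimed to work this way.

```python
from pathlib import Path

# Conventions named in the text. Treating them as a fixed list of
# relative paths is an assumption made for this sketch.
CONTEXT_PATHS = ("CLAUDE.md", "AGENTS.md", ".cursor/rules")


def find_context_files(repo_root):
    """Return the known context paths that exist under repo_root, sorted."""
    root = Path(repo_root)
    return sorted(name for name in CONTEXT_PATHS if (root / name).exists())


if __name__ == "__main__":
    # Report which conventions the current working directory uses.
    print(find_context_files("."))
```

The point of the sketch is that discovery needs nothing beyond the filesystem: any client, script, or human can find the same state without a runtime or an account.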

If the repository is already where humans coordinate engineering work, it should also be where agents coordinate. Don't invent a parallel system. Use the one engineering teams have used for decades. This camp is still early as a product category, but when researchers look at what developers actually adopt, this is where the empirical evidence keeps pointing.

04 / What 3,000 repositories actually do

In February 2026, academic researchers published a study analyzing nearly 3,000 repositories that use Claude Code, GitHub Copilot, Cursor, Gemini, and Codex. They examined eight different configuration mechanisms: slash commands, MCP servers, sub-agents, skills, hooks, settings, prompts, and context files. Their finding:

"Context Files dominate the configuration landscape... AGENTS.md functions as an interoperable standard."

Among the eight mechanisms they studied, the one developers actually adopt at scale is the one that stores context as files in the repo. Not the more advanced mechanisms like Skills and Subagents (which the paper notes are "only shallowly adopted"). Plain context files in the repo.

A separate study from January 2026 analyzed 42,000 commits and 4,700 resolved issues across eight major multi-agent frameworks (LangChain, CrewAI, AutoGen, and others). The authors categorized every issue. The top categories were not model intelligence problems. They were bugs (22%), infrastructure (14%), and agent coordination (10%). Combined, that is 46%: nearly half of all reported issues in multi-agent AI systems are operational, not model-quality.

A third paper from April 2026 dissected Claude Code's architecture and found that some of its most complex internals, including a five-layer compaction pipeline for context management, exist specifically to manage context pressure and preserve usable working context. Even the model provider's own coding client treats context preservation as a hard architectural problem worth dedicating significant engineering to.

The pattern across all three is the same. The field is now putting its engineering energy into the layer above the model: context, state, coordination, reliability. The evidence doesn't prove what will survive, but it does point to a durable primitive: the substrate that lives where engineering teams already live, the repo.

05 / What that looks like in Storybloq

Storybloq's bet is specific. Inside every project, a .story/ directory holds:

Tickets: planned work, one JSON file per item, with explicit fields for status, phase, dependencies, parent/child relationships.

Issues: discovered bugs and gaps, with severity, impact, components, related tickets.

Handovers: narrative markdown documents written at the end of each significant session, preserving decisions, blockers, and what's next.

Lessons: graded patterns the project has learned over time, with reinforcement counts and last-validated dates.

Roadmap phases: the structural shape of the project, ticket dependencies derived from canonical fields.

All git-tracked. All readable by compatible AI clients through MCP tool calls, or by a human in any text editor. No database, no SaaS account, no lock-in.
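To make the "one JSON file per item" idea concrete, here is a minimal sketch of reading ticket files and working out which open tickets are unblocked. It is not Storybloq's actual schema or code: the field names `id`, `status`, and `dependencies`, the status value `"complete"`, and the directory passed in are all assumptions chosen for illustration.

```python
import json
from pathlib import Path


def load_tickets(tickets_dir):
    """Read one JSON file per ticket, keyed by a hypothetical "id" field."""
    tickets = {}
    for path in sorted(Path(tickets_dir).glob("*.json")):
        ticket = json.loads(path.read_text(encoding="utf-8"))
        tickets[ticket["id"]] = ticket
    return tickets


def ready_tickets(tickets):
    """Return ids of open tickets whose dependencies are all complete."""
    # "complete" is an assumed status value, not a documented one.
    done = {tid for tid, t in tickets.items() if t["status"] == "complete"}
    return sorted(
        tid
        for tid, t in tickets.items()
        if t["status"] != "complete"
        and set(t.get("dependencies", [])) <= done
    )
```

Because the state is plain JSON in the repo, a query like this can run from a script, a CI job, or an agent without any service in between, and every change to a ticket shows up as an ordinary diff.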

Our evidence is practical, not theoretical: we use Storybloq to develop Storybloq. The workspace repo currently holds 289 tickets across 16 phases (233 of them complete), 265 session handovers, and 18 active lessons. Most of that history is private, but the artifacts that come out of it (commits, releases, the public CLI source) all came from sessions that began by reading that accumulated state and ended by updating it.

Early third-party usage points in the same direction. An external operator running Storybloq's autonomous mode on a separate project monitored 19 ticks of a 9-commit run and reported back:

"Ticket vs issue is the right model. The agent honored this discipline, filing 8 issues during the run for things out of scope rather than fixing them in-flight... The product gets out of my way and gives me the right primitives."

That sentence, "gets out of my way and gives me the right primitives," is the entire pitch of the camp 3 architecture. The substrate works because it doesn't try to be the runtime. It just makes sure the right state survives whatever runtime the developer happens to be using.

06 / Adjacent work in the same camp

Storybloq is not alone in this camp. The heaviest institutional weight is on GitHub's own spec-kit (95,000+ stars at the time of writing). Spec-kit is a spec-first delivery framework: each new feature gets a templated specs/###-feature/ folder with structured requirements (User Stories, Functional Requirements, Acceptance Scenarios), a tech plan, and a task breakdown, plus a /speckit.implement command that executes the plan. The bet is that specifications are the executable artifact and code is generated from them.

Storybloq's bet is different. Where spec-kit captures what to build for a new feature, Storybloq captures what is happening across the whole project lifecycle: tickets, issues, handovers, lessons, session-to-session continuity. Spec-kit is spec-driven; Storybloq is state-driven. The two stack naturally: spec-kit for spec-to-code on a new feature, Storybloq for session-to-session continuity across many features. Different layers, same camp.

The convention layer is part of this camp too. CLAUDE.md, AGENTS.md, .cursor/rules, and similar files all point to the same architectural insight: project context lives in the repo, in plain text, where humans and agents can both read and write it. The 3,000-repository study discussed earlier (the Galster paper) found that these context files dominate adoption, which is the empirical support for the repo-native argument.

The category is forming around a shared substrate idea: the repository is the right place for AI engineering memory. Storybloq's bet is to turn that idea from loose convention into structured operational memory, complementing the spec-driven approach without competing with it.

07 / Where this bet might be wrong

It would be dishonest not to name the limits.

This approach requires workflow discipline. If teams don't write handovers, don't update ticket status, and don't file issues when they discover gaps, the .story/ directory becomes useless quickly. The system's strength assumes the operator wants the discipline. Some don't.

It's not optimized for enterprise governance UIs. Centralized compliance pipelines, hosted policy enforcement, and CIO-grade portfolio dashboards are problems hosted platforms solve better than file-based systems do.

But that does not make repo-native systems "small-team tools." Git already scales from solo projects to the largest engineering organizations in the world. The distinction is not team size. The distinction is where agent-facing engineering memory should live.

Storybloq's bet is that the memory an agent needs to do good engineering work belongs close to the code, where it can be inspected, reviewed, diffed, versioned, and carried across tools. Jira can remain the organizational planning layer. The repo should become the operational memory layer.

It's also vulnerable to native shipping. If Anthropic, OpenAI, or another major model provider ships persistent project memory natively into their coding clients, the camp 3 tools become thinner, possibly to the point of being absorbed into convention. The mitigation is that file conventions are open: if the providers converge on .story/ or something like it, the format wins as a standard even if specific implementations get displaced.

And the substrate doesn't make AI infallible. It makes mistakes recoverable, decisions auditable, and context preserved. It catches the "about to break the database" class of mistake more reliably than an ad-hoc workflow does. It does not eliminate the failure mode where the operator told the agent to do the wrong thing in the first place.

08 / What we're building next

Most of Storybloq's roadmap is moving deeper into the camp 3 thesis. The next phase is synthesis: not just storing project state but reading across it. Cross-handover synthesis to surface what a project has actually learned over months, not just what happened last session. Cross-artifact relevance to surface the right lessons at the moment they apply, not at the moment a human remembers to look. Trust calibration in the Mac app so a human watching an agent work can develop accurate intuition about when to trust it more or less.

These features are only possible because the substrate is structured. Memory tools that store arbitrary facts cannot synthesize project narrative across sessions; their primitive is optimized for a different problem. Runtime orchestration systems can synthesize execution graphs but not human-readable engineering history; their substrate is optimized for a different audience. The repo-native camp can do both because the substrate matches both audiences.

Three camps. Three bets on what becomes the substrate. The evidence doesn't prove the repo-native camp will win. But when developers need AI agents to remember project context, they keep reaching for files in the repo. We're betting that's not a coincidence.